Bruno Volckaert, Bjorn Claes, Laurens D’Hooge, Miel Verkerken, Tim Wauters and Filip De Turck, IDLab-UGent-IMEC

Published: 13 Nov 2019

Abstract

Due to the increasing dependence on a company’s internal network for the exchange of information, protecting these networks is key. One essential defense is using a network intrusion detection system (NIDS). Most commercial NIDS are signature-based, meaning their effectiveness is highly dependent on the threat database used. To overcome this, we present anomaly detection incorporating various machine-learning models, including multilayer perceptrons, convolutional neural networks and residual networks. Promising results are obtained on the NSL-KDD data set with ROC-AUC scores above 0.93 on deep convolutional neural networks.

1. Introduction

Ever since information systems became critical assets, companies have been investing in cybersecurity to protect their infrastructure and information against hacking. One of the key defenses is a NIDS, monitoring the company’s network to detect suspicious behavior. However, most commercial NIDSs are signature-based, meaning their effectiveness is dependent on the threat signatures used. A more effective NIDS capable of dealing with unexpected threats must be designed. Key is the ability to efficiently assess normal and acceptable behavior of the messaging on the company network, and to quickly detect deviations that indicate suspicious behavior. This paper investigates several machine-learning approaches to improve intrusion detection systems [1] by recognizing uncharacteristic and suspicious network traffic.

2. Intrusion Detection Systems

In this paper, four types of attacks are considered: Denial-of-Service, probing, remote-to-local and user-to-root attacks [2].

2.1 Intrusion detection systems

Different types of IDSs can be distinguished based on the following criteria:

- By IT entity: IDSs fall into two types depending on the system being monitored: network-based IDSs and host-based IDSs. The former passively monitor all traffic on the internal network. The latter examine a single computing device by analyzing logs, process characteristics and other information to identify suspicious behavior.

- By detection methodology: in a signature-based approach, signatures in observed events are compared with a database of known malicious signatures. In stateful protocol analysis, threats are detected by comparing observed messages with the definitions of benign protocol activity in order to identify deviations. Finally, anomaly-based methods detect malicious packets by comparing them to a baseline model that represents the normal state of an IT entity.

Anomaly-based IDSs can be further classified into three distinct categories: statistical-based, knowledge-based and machine-learning-based. In statistical-based IDSs, network traffic is captured and used to create a model that reflects the normal stochastic behavior of the network. Thereafter, malicious behavior is detected by comparing captured network events with the baseline and classifying them as anomalies when they deviate significantly. In knowledge-based models, a set of rules is used to classify network traffic as either normal traffic or outliers. Finally, machine-learning-based IDSs also create models to classify network packets. The main difference is that these models are not limited to stochastic properties and do not necessarily use thresholds to classify packets [3,4].
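The statistical-based approach described above can be illustrated with a minimal sketch. It assumes a single numeric traffic feature, a baseline summarized by mean and standard deviation, and a z-score threshold of 3; the feature, the function names and the threshold are illustrative assumptions, not taken from this paper.

```python
from statistics import mean, stdev


def fit_baseline(normal_values):
    """Summarize normal traffic by its mean and sample standard deviation."""
    return mean(normal_values), stdev(normal_values)


def is_anomaly(value, baseline, threshold=3.0):
    """Flag a value whose z-score against the baseline exceeds the threshold."""
    mu, sigma = baseline
    if sigma == 0:
        # Degenerate baseline: any deviation from the constant value is anomalous.
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical feature: connections per second seen during normal operation.
normal_rates = [12, 15, 11, 14, 13, 12, 16, 14]
baseline = fit_baseline(normal_rates)

print(is_anomaly(13, baseline))  # a value close to the baseline
print(is_anomaly(90, baseline))  # a value far from the baseline
```

A machine-learning-based IDS replaces this fixed threshold on one statistic with a learned decision function over many features.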

3. NIDS Design

In the design of our NIDS, different techniques have been applied, each affecting the working and effectiveness of the IDS. Three requirements have been identified that are considered essential: detection effectiveness, time required to make a prediction for a data sample and time required to train the model.

3.1 Model selection

In the first step of the evaluation procedure, the model to be evaluated must be selected [2, 5]. The following options have been investigated:

- Logistic regression model: a classification model that calculates a weighted sum over the features of a data sample and passes it as input to a softmax function.

- Random forest ensemble: combines multiple decision trees and a majority voting method to perform classification.

- Multilayer perceptron: neural network consisting of several layers of perceptrons, which are binary classification models that consist of a weighted sum of features and a non-linear activation function.

- Convolutional neural network: neural network with convolutional neurons that consist of a kernel learning local features in the input data, a convolution operation and a non-linear activation function.

- Residual network: advanced neural network containing several residual blocks, each consisting of a shallow neural network and an identity mapping.

- ResNeXt network: extension of the residual network in which the convolutional neural network is split into several smaller convolutional neural networks of the same depth.
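To make two of the building blocks in this list concrete, the sketch below shows a one-dimensional convolutional neuron (kernel, sliding weighted sum, ReLU activation) and a residual block (shallow transform plus identity mapping). It is a plain-Python illustration under simplifying assumptions (stride 1, no padding, a single kernel); the function names are hypothetical and this is not the Keras implementation used in the paper.

```python
def relu(x):
    """Non-linear activation: keep positive values, clip negatives to zero."""
    return max(0.0, x)


def conv1d_neuron(inputs, kernel, bias=0.0):
    """Slide the kernel over the input (stride 1, no padding), then apply ReLU.

    As in most deep-learning libraries, the kernel is not flipped, so this is
    strictly a cross-correlation.
    """
    k = len(kernel)
    outputs = []
    for start in range(len(inputs) - k + 1):
        window = inputs[start:start + k]
        weighted_sum = sum(w * x for w, x in zip(kernel, window)) + bias
        outputs.append(relu(weighted_sum))
    return outputs


def residual_block(inputs, transform):
    """Add the block input (identity mapping) to the output of a shallow transform."""
    return [x + fx for x, fx in zip(inputs, transform(inputs))]


# The kernel [-1, 1] responds to increases between neighboring inputs.
print(conv1d_neuron([1, 2, 3, 4], [-1, 1]))
# The transform here is a stand-in for a shallow network with same-sized output.
print(residual_block([1.0, 2.0], lambda v: [0.5 * x for x in v]))
```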

3.2 Data set selection

The NSL-KDD data set, introduced by Tavallaee et al. [6] to solve some of the issues in the KDD’99 data set, is one of the most frequently used data sets to train and validate anomaly-based NIDSs. It contains 125,973 training samples and 22,544 test samples, each consisting of 41 features, three of which are categorical. Since most models can only process numerical values, those three features are converted to their one-hot encoded representation, which leads to a new data set with 122 features.
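The one-hot conversion can be sketched as follows. The toy records and column positions are illustrative only. The category counts used in the width calculation (3, 70 and 11 for the three categorical features) are the values commonly reported for the NSL-KDD training set; they are an assumption here, since the text states only the resulting total of 122.

```python
def one_hot_encode(rows, categorical_columns):
    """Replace each categorical column by one 0/1 column per observed category."""
    # Collect the sorted set of categories seen in each categorical column.
    categories = {
        col: sorted({row[col] for row in rows}) for col in categorical_columns
    }
    encoded = []
    for row in rows:
        new_row = []
        for col, value in enumerate(row):
            if col in categories:
                new_row.extend(1 if value == c else 0 for c in categories[col])
            else:
                new_row.append(value)
        encoded.append(new_row)
    return encoded, categories


def encoded_width(total_features, cardinalities):
    """Feature count after one-hot encoding the categorical columns."""
    return total_features - len(cardinalities) + sum(cardinalities)


# Toy records: (duration, protocol, flag).
rows = [(0, "tcp", "SF"), (2, "udp", "SF"), (5, "icmp", "REJ")]
encoded, categories = one_hot_encode(rows, categorical_columns=[1, 2])
print(encoded[0])

# Assumed cardinalities: 41 - 3 + (3 + 70 + 11) features.
print(encoded_width(41, [3, 70, 11]))
```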

3.3 Feature engineering and hyperparameter tuning

In the NSL-KDD data set, the features do not contain only relevant and highly discriminative information. As a result, machine-learning models cannot reach their full potential because they pick up irrelevant correlations between features and redundant information. To overcome this, two techniques have been identified: a feature selection algorithm with a forward search approach and an autoencoder. The feature selection algorithm removes redundant and irrelevant data by subdividing the features into groups of a specific size and feeding them to the model per group. In each iteration, the group that leads to the highest accuracy is merged with the groups that have already been selected, provided that the improvement is greater than a specified threshold. Subsequently, a deep symmetrical autoencoder is used to learn advanced projections between the features in order to make the data more discriminative. A deep symmetrical autoencoder is a neural network consisting of an encoder and a decoder: the encoder is a multilayer perceptron (MLP) in which the number of nodes per layer decreases with depth, and the decoder is the mirror image of the encoder. In this paper, the autoencoder has an encoder depth of four layers and compresses the 122 original features to 40.
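The forward search over feature groups might look like the sketch below. The `score` callback stands in for training and validating a model on a feature subset and is hypothetical, as are the toy feature names and weights. Stopping as soon as the best remaining group fails to beat the threshold is an assumption; the text does not state the stopping rule.

```python
def forward_group_selection(features, group_size, score, min_gain):
    """Greedily add the feature group that improves the score the most."""
    groups = [
        features[i:i + group_size] for i in range(0, len(features), group_size)
    ]
    selected, best_score = [], 0.0
    while groups:
        # Score every remaining group together with what is already selected.
        scored = [(score(selected + group), group) for group in groups]
        candidate_score, candidate = max(scored, key=lambda pair: pair[0])
        # Keep the group only if the improvement exceeds the threshold.
        if candidate_score - best_score <= min_gain:
            break
        selected = selected + candidate
        best_score = candidate_score
        groups.remove(candidate)
    return selected, best_score


# Hypothetical scoring function: each informative feature adds a fixed amount.
weights = {"f0": 0.5, "f3": 0.3, "f4": 0.1}


def toy_score(subset):
    return sum(weights.get(name, 0.0) for name in subset)


names = ["f0", "f1", "f2", "f3", "f4", "f5"]
selected, best = forward_group_selection(
    names, group_size=2, score=toy_score, min_gain=0.15
)
print(selected, best)
```

In this toy run the third group is rejected because it would add less than the 0.15 threshold, which is how the procedure discards features that carry little extra information.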

To select the hyperparameters that yield the highest possible accuracy, two approaches have been tested: grid search and Bayesian optimization. Grid search is a naive algorithm that tests every combination of hyperparameters and selects the one that leads to the best detection accuracy. Bayesian optimization, on the other hand, is a more advanced technique that uses a Gaussian process to model the cost function in relation to the model’s hyperparameter combinations, and again selects the combination that leads to the best detection accuracy.
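Grid search is simple enough to sketch in a few lines. The hyperparameter names, the candidate values and the `toy_accuracy` function below are hypothetical; in practice `evaluate` would train a model with the given settings and return its validation accuracy. Bayesian optimization is not shown here.

```python
from itertools import product


def grid_search(param_grid, evaluate):
    """Evaluate every combination in the grid and return the best one."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        current = evaluate(params)
        if current > best_score:
            best_params, best_score = params, current
    return best_params, best_score


def toy_accuracy(params):
    """Stand-in for training and validating a model (illustrative only)."""
    return (
        1.0
        - abs(params["learning_rate"] - 0.01)
        - 0.1 * abs(params["layers"] - 3)
    )


grid = {"learning_rate": [0.001, 0.01, 0.1], "layers": [1, 3, 5]}
best_params, best_score = grid_search(grid, toy_accuracy)
print(best_params, best_score)
```

The cost grows with the product of the number of values per hyperparameter (nine evaluations here), which is the main motivation for model-based alternatives such as Bayesian optimization.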

3.4 Model training and validation

Finally, the models are trained and evaluated. The evaluation platform consists of a single computing device containing an Intel i7-7700 processor with four cores, a clock rate of 3.60 GHz, 8 MB of cache and 32 GB of DDR4 SDRAM, and a GeForce RTX-2070 GPU with 8 GB of GDDR6 SDRAM. Python 3.7.1 is chosen as the implementation language due to its wide variety of machine-learning libraries, three of which are used here: Keras with a TensorFlow backend to implement the GPU-enabled neural networks, scikit-optimize to implement Bayesian optimization and scikit-learn to implement the other models.

4. Evaluation

The models are checked against the training and prediction time constraints and evaluated on their overall accuracy.

4.1 Training time

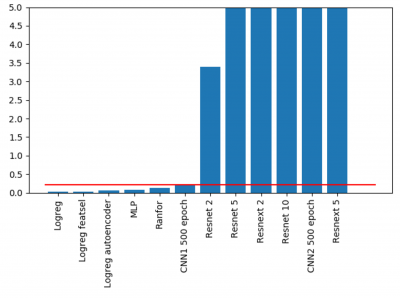

In this paper, a training time threshold is configured to a maximum of 30 seconds per epoch for neural networks and 10 minutes for other models. The difference is explained by the fact that neural networks can already be used after they have completed one epoch, albeit at lower accuracy.

In Fig. 1, the training time of the models is compared. All models were trained on the nonstandard NSL-KDD data set and the training time of the neural networks is expressed per 20 epochs, which at 30 seconds per epoch corresponds to the same 10-minute limit that applies to the other models. Half of the models do not meet this constraint, including all designed residual and ResNeXt networks. This is not unexpected, since these models require many calculations.

4.2 Detection time

It is essential for a NIDS to detect malicious behavior as quickly as possible. Consequently, it was decided that a single-node NIDS must be able to detect anomalies within 225 milliseconds. Fig. 2 shows that half of the models do not meet this requirement, including all residual and ResNeXt networks and one of the convolutional neural networks. This can again be attributed to the number of calculations that must be performed during prediction.
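A detection-time check of this kind could be sketched as below. The text does not say whether the 225 ms budget applies to a single sample or to a batch, nor how the timing was taken, so the measurement procedure (median over repeated calls), the function names and the stand-in `predict` callable are all assumptions.

```python
import time
from statistics import median


def prediction_latency_ms(predict, sample, repeats=20):
    """Return the median wall-clock time of predict(sample) in milliseconds."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        predict(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    return median(timings)


def meets_detection_budget(latency_ms, budget_ms=225.0):
    """Check a measured latency against the detection-time requirement."""
    return latency_ms <= budget_ms


# Stand-in for a trained model: any callable that takes one sample.
sample = [0.0] * 122
latency = prediction_latency_ms(lambda s: sum(s), sample)
print(meets_detection_budget(latency))
```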

4.3 Accuracy

Finally, the network intrusion detection system must detect all attacks on the network while ignoring normal behavior. Therefore, accuracy is vital. As can be observed in Fig. 3, the convolutional neural network with one kernel layer, the residual network with two residual blocks, and the residual network with five residual blocks achieve the highest effectiveness, with a Matthews Correlation Coefficient score above 0.65.
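For reference, the Matthews Correlation Coefficient for a binary attack/normal decision can be computed from the four confusion-matrix counts, as in the sketch below. The counts in the example are made up for illustration and are not results from this paper. The coefficient ranges from -1 to 1, and unlike plain accuracy it stays informative when the two classes are of very different sizes.

```python
from math import sqrt


def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient from binary confusion-matrix counts."""
    denominator = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        # Conventional value when a row or column of the matrix is empty.
        return 0.0
    return (tp * tn - fp * fn) / denominator


# Illustrative counts: 6 attacks caught, 2 missed, 3 normal passed, 1 false alarm.
print(round(mcc(tp=6, tn=3, fp=1, fn=2), 4))
```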

The highest effectiveness is achieved by a residual network with two residual blocks and an initial block consisting of a CNN with two core layers, a batch normalization layer and a ReLU layer. However, since this model does not meet the training and prediction time constraints, the convolutional neural network with one kernel layer is selected as the best model.

5. Conclusion

This paper proposed a NIDS capable of detecting unknown attacks in a fast and efficient manner. Three essential requirements have been identified that a machine-learning-based NIDS must meet: accuracy, the time required to make a prediction for a data sample and the time required to train the model. Different models were evaluated based on these requirements. The highest effectiveness is achieved by a residual network with two residual blocks and an initial block consisting of a CNN with two core layers, a batch normalization layer and a ReLU layer. However, since this model does not meet the training and prediction time constraints, the convolutional neural network with one kernel layer is proposed as the best fit. Convolutional neural networks offer an exciting new avenue of research into next-generation NIDSs.

References

- L. P. Dias et al., “Using artificial neural network in intrusion detection systems to computer networks,” in 2017 9th Computer Science and Electronic Engineering Conference (CEEC), pp. 145–150, 2017.

- K. K. Patel and B. V. Buddhadev, “Machine learning based research for network intrusion detection: a state-of-the-art,” Int’l J. of Information & Network Security (IJINS), vol. 3, no. 3, pp. 1–20, 2014.

- P. García-Teodoro et al., “Anomaly-based network intrusion detection: techniques, systems and challenges,” Computers and Security, vol. 28, no. 1-2, pp. 18–28, 2009.

- B. G. Atli et al., “Anomaly-based intrusion detection using extreme learning machine and aggregation of network traffic statistics in probability space,” Cognitive Computation, vol. 10, no. 5, pp. 848–863, 2018.

- P. A. Alves Resende and A. C. Drummond, “A survey of random forest based methods for intrusion detection systems,” ACM Computing Surveys, vol. 51, no. 3, 2018.

- M. Tavallaee et al., “A detailed analysis of the KDD CUP 99 data set,” in IEEE Symposium on Computational Intelligence for Security and Defense Applications (CISDA), pp. 53–58, 2009.
